Morning. 6AM. A message arrives on my WhatsApp from a conversation named Minato: a roughly 1,000-word briefing on the latest news in world affairs, science, and AI. Minato is my personal AI assistant, built with OpenClaw, an open-source local AI agent framework. It lives on the iMac in my study and is powered by Gemini and Claude. I named it after Minato, the Fourth Hokage from Naruto, known for his speed and precision. The name felt right for something that moves fast and works quietly in the background. Every morning without fail, it compiles and delivers my briefing before I’ve made my first cup of coffee.

Last December, I was writing about a “futuristic scenario” where I could simply chat on my phone and get a project initiated, code written, run, and debugged, results produced, and figures delivered to me, all without being physically at my working machine. For example, I could build a Python-based tool that organizes and synthesizes my news pieces and, over time, builds a database that can be mined and visualized. Just over a month later, that futuristic scenario is already a reality. Besides sending me the morning news, Minato also saves each briefing to a dated file and archives it. I could direct it to write an analysis script right now, but I will wait until the end of the year. It feels exciting. But if I let my imagination run a little further, it also terrifies me.
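For the curious, the dated archive could be as simple as the sketch below. This is not Minato's actual code; the function name `archive_briefing`, the year/month folder layout, and the `briefing-YYYY-MM-DD.md` file name are all assumptions made for illustration.

```python
from datetime import date
from pathlib import Path


def archive_briefing(text, archive_dir, day=None):
    """Write one briefing to <archive_dir>/<YYYY>/<MM>/briefing-<YYYY-MM-DD>.md.

    The folder layout and file name are hypothetical; any dated scheme works.
    """
    day = day or date.today()
    # Group files by year and month so the archive stays browsable.
    folder = Path(archive_dir) / f"{day:%Y}" / f"{day:%m}"
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"briefing-{day.isoformat()}.md"
    path.write_text(text, encoding="utf-8")
    return path
```

With one file per day, an end-of-year analysis script only has to walk the folder and read the files back in date order.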

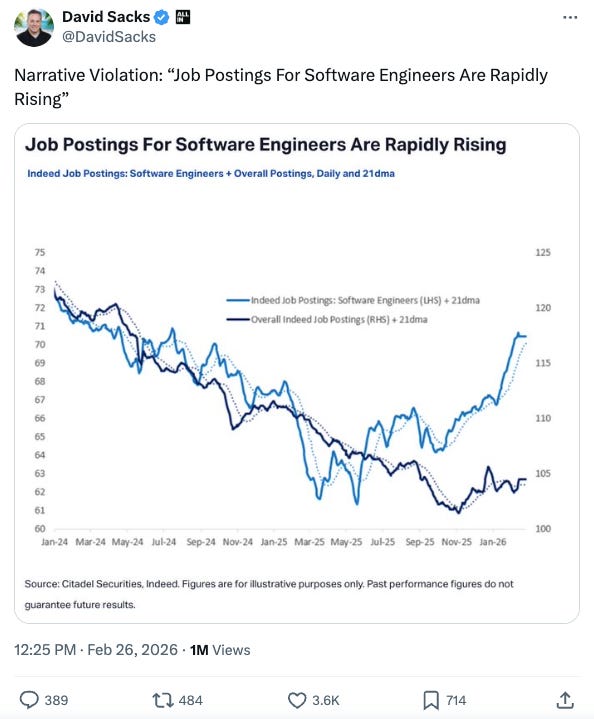

Code agents, specialized large language models designed for coding, are progressing rapidly and stampeding over everything in their path. If you watch financial markets, you saw the IT and enterprise software sector fall hard after the joint release of Claude Code Opus 4.6 and Codex 5.3. I think the fear is at least half justified. On the other hand, the job market for software developers is going up, as shown in charts David Sacks posted on Twitter. Similarly, vibe coding startups are growing at lightning speed. See The Economist’s report on Lovable, a European startup, titled “At last, reasons to be cheerful about European tech.” People fear that code agents will eliminate jobs, but in the right hands, they turn one software engineer into a productive team.

After reading my morning briefing, I sit down at my working machine and check what my multiple running instances of Codex (OpenAI’s code agent) and Claude Code (Anthropic’s code agent) have written in their terminals overnight. I review the output, evaluate progress, then set the agents on their next tasks. These range from rolling out my newly trained graph neural network models using a shell script template, to putting a successfully developed feature under version control with git and releasing a new version with comprehensive documentation, to launching another batch of simulations that samples 100 parameter combinations across 20 available CPUs, to simply telling them they did a good job.
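To make the simulation batch concrete, here is a minimal sketch of sampling 100 parameter combinations and spreading the runs over 20 worker processes. It is an illustration under assumptions, not my production setup: the parameters `alpha` and `beta`, their ranges, and the toy `run_simulation` function are hypothetical stand-ins for a real model.

```python
import random
# ThreadPoolExecutor is imported only so it can be swapped in for quick local checks.
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def sample_parameters(n_samples, seed=0):
    """Draw n_samples random combinations of two hypothetical parameters."""
    rng = random.Random(seed)  # fixed seed keeps the batch reproducible
    return [
        {"alpha": rng.uniform(0.0, 1.0), "beta": rng.uniform(10.0, 100.0)}
        for _ in range(n_samples)
    ]


def run_simulation(params):
    """Stand-in for a real simulation; returns a toy scalar result."""
    return params["alpha"] * params["beta"]


def run_batch(param_sets, n_workers=20, executor_cls=ProcessPoolExecutor):
    """Run every parameter set, using at most n_workers workers at a time."""
    with executor_cls(max_workers=n_workers) as pool:
        # map() preserves input order, so results[i] belongs to param_sets[i].
        return list(pool.map(run_simulation, param_sets))
```

In a script, `run_batch(sample_parameters(100), n_workers=20)` would go under an `if __name__ == "__main__":` guard, which process pools need on platforms that spawn workers rather than fork them.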

Then I open the Codex App, pull up my conversation, and ask it to show me today's to-do list from a living WORK.md document, a file that tracks my todos, things done, ideas, and project progress in real time. Whenever something gets done, a fresh idea sparks, or any progress is made, I just type it into the conversation, and Codex follows my rules to merge, track, and document everything in WORK.md. I imagine this will make my life considerably easier when annual report season comes around. I certainly can't afford a personal assistant. But Minato and Codex are the closest thing I've got.

This isn’t strictly coding anymore. And the companies building code agents have noticed that people increasingly use them not just to write code, but to handle tedious workflows and personal organization. I recommend the recent episode of Lenny’s Podcast featuring Boris Cherny, creator and head of Claude Code at Anthropic, titled “What Happens After Coding Is Solved” (February 19, 2026).

After I get a review of my todos, I tell the Codex App my two or three priorities for the day. I make my coffee and eat breakfast. The day begins, and it feels wonderful.

10AM. For the old me, the day would have just started. Now, a lot has already been done. Sometimes the pace of progress feels so surreal that it’s hard to believe Claude Code, which started all this amazing and terrifying momentum, was launched barely a year ago. A few years ago, if you asked a chatbot to do basic arithmetic, it couldn’t get it right. People joked about it. Fair entertainment. But if you’re still making that joke now, you’re the punchline. What really matters is the rate of iteration in the development and deployment of these new capabilities. This is no longer a six-month or yearly cycle. It moves by the month, sometimes by the week.

In the interview, Boris mentions that 4% of all public GitHub commits now come from Claude Code. Claude Code has become a prolific author of public code. Boris says that figure could reach 20% within a few years. And if you count private repositories, the share would be even higher. I believe it completely, because I’ve personally built multiple pieces of software for my private use with Claude Code, and that’s a lot of lines never counted in any public statistic.

Howard Marks, co-chairman of Oaktree Capital Management, published a memo on February 26, 2026 titled “AI Hurtles Ahead.” I’ve long admired Howard as a thinker and writer navigating this complex, fast-moving world. He probably doesn’t code much, but what struck me most was his discussion of the economic impact of code agents, drawn after just a handful of uses. People debate whether machines are truly thinking, truly intelligent. Interesting questions, perhaps, but maybe too academic. The real economic question is this: “If a code agent can deliver a report equivalent to what a $200,000 analyst produces, does whether the machine ‘thinks’ still matter?” But also, if a code agent can deliver the reports of a highly paid analyst, how important were those reports if they can now be automated away? Perhaps the reports themselves were the friction point that AI is removing.

I’ve been using code agents to develop prompts that query business performance and produce equity analysis reports. What I can say is that the breadth of search, the range of sources, is something I simply cannot compete with alone. The report quality is okay, not excellent yet, but solid enough, with sufficient factual data and tables to be useful if handled wisely. If you have followed a business for years, you can judge the quality of a report on it yourself. For example, this report on Estee Lauder. And crucially, you can run as many such deep research tasks as you have tokens for. Whoever controls the tokens controls the information.

That brings me to the second striking insight from Howard’s memo. With information barriers lowered, if not demolished, by AI tools, where do we create value? In financial markets, short-term gains will be harder to come by, but long-term opportunities will remain plentiful, because most people still chase short-term results. As Charlie Munger, the late Berkshire Hathaway vice chairman, liked to quote, “Always take the high road, it is far less crowded.” AI will beat you in the short-term, zero-sum game that rewards scope and speed of information, but it may not yet be able to truly “envision” the far future.

For academic work, we face a similar reckoning.

The deep research function is now available in Claude Code (it also has an extended thinking toggle) and Gemini Pro. I’ve been experimenting with using it to generate reference lists on specific research topics. It consumes many tokens and takes ten minutes or more, but the product is genuinely impressive: broad coverage, typically ~500 sources or more, with useful summaries and good detail. You can even ask it to verify DOIs before writing the final report. It cannot replace years of deep research experience and insight, but it will quickly help you fill the knowledge gaps in any new topic that interests you. I typically pick a paper and append to its abstract: “please do a deep research on this topic; give me a peer-reviewed reference list; verify DOIs; I only have time to read 10 papers, so rank your reference list by impact; return to me a .md report; take your time.”

The information barrier is gone. So where, as researchers, do we bring in value? Judgment? I’m not sure. But what I notice is that when I now face an unfamiliar topic, I have no mental barrier to entry. The learning process has accelerated dramatically.

AI is excellent at filling knowledge gaps. But genuine insight still takes time and hard work. Maybe the answer resembles the investment question above: focus on the long term, on something fundamental, and, probably more important, on something you are interested in. As I see it, speed doesn’t take away insight. The two don’t conflict. Effective iterations bring insights, and fast turnarounds may actually bring the insights you expect sooner.

To close, I return to the epigraph of Robert M. Pirsig’s Zen and the Art of Motorcycle Maintenance, “And what is good, Phaedrus, And what is not good, Need we ask anyone to tell us these things?”

Nurture your judgment. Cultivate your aesthetic taste in your work. That is what AI has not yet taken over, and what I hope and imagine it will not, at least not anytime soon.

Bio for the Author

Dunyu Liu is a senior computational geoscientist at the Institute for Geophysics at the University of Texas at Austin. More on his research interests can be found here.