In my last post I had some success with a world model in a synthetic toy domain. My next step was to apply what I learned to simple real-world robot data. Here are some results that demonstrate action-conditioned predictions on data from an SO-101 arm, using a GPT-inspired model as well as a diffusion transformer (DiT) based model.

I initially tried adapting the model from the toroidal environment to the SO-101 arm data, but didn’t see much success. I ran several experiments and made a number of changes to the training procedure: sampling higher-error data more often, trying different learning rate schedulers such as reducing the learning rate on plateau, changing the stopping criterion from a fixed budget to validation/training loss divergence, training incrementally on larger and larger subsets of the data, and adding a learning rate sweep throughout the training process. You can look at the commit history of developmental-robot-movement from January 7, 2026 to March 8, 2026 to see more details.
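As a rough illustration of two of these ideas (this is a sketch, not the code from the repo), the snippet below shows error-weighted sampling and a stopping rule based on the validation/training gap. The function names `sample_by_error` and `should_stop`, the `patience` and `min_gap_growth` parameters, and the exact definition of "divergence" (the gap growing for several epochs in a row) are all assumptions made for the example.

```python
import random


def sample_by_error(errors, k, rng, floor=1e-6):
    """Draw k sample indices with probability proportional to per-sample error.

    `floor` keeps zero-error samples from being excluded entirely
    (an assumption for this sketch).
    """
    weights = [max(e, floor) for e in errors]
    return rng.choices(range(len(errors)), weights=weights, k=k)


def should_stop(train_losses, val_losses, patience=3, min_gap_growth=1e-3):
    """Return True once the (val - train) gap has grown by more than
    `min_gap_growth` for `patience` consecutive epochs."""
    gaps = [v - t for t, v in zip(train_losses, val_losses)]
    if len(gaps) <= patience:
        return False  # not enough history yet
    recent = gaps[-(patience + 1):]
    return all(b - a > min_gap_growth for a, b in zip(recent, recent[1:]))


if __name__ == "__main__":
    rng = random.Random(0)
    # The third sample has by far the largest error, so it dominates the batch.
    print(sample_by_error([0.01, 0.02, 0.9], k=8, rng=rng))
    # Training loss keeps falling while validation loss stalls.
    print(should_stop([1.0, 0.8, 0.6, 0.5, 0.4], [1.0, 0.85, 0.7, 0.7, 0.7]))
```

A fixed budget would keep training in the second case even though the model has started to overfit, which is the motivation for a divergence-based rule.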

Despite some signs of action conditioning after fixing data quality issues, continued experimentation didn’t yield much and progress felt slow to non-existent.

I had recently read the essay As Rocks May Think by Eric Jang where he described his process of having Claude Code set up and run his experiments. About a month later Andrej Karpathy started publicizing his efforts with automated research and I decided to give it a try. I prompted Claude Code to run training, read a generated report, make changes to improve the model and repeat. I started to get better results right away after Claude finished its first experimentation session.

Instead of me taking a day or more to try out a variation of a single idea, Claude Code could spend the night trying 10+ variations of one idea or several different ideas. Almost every session either produced performance gains or found the limit of a particular idea.

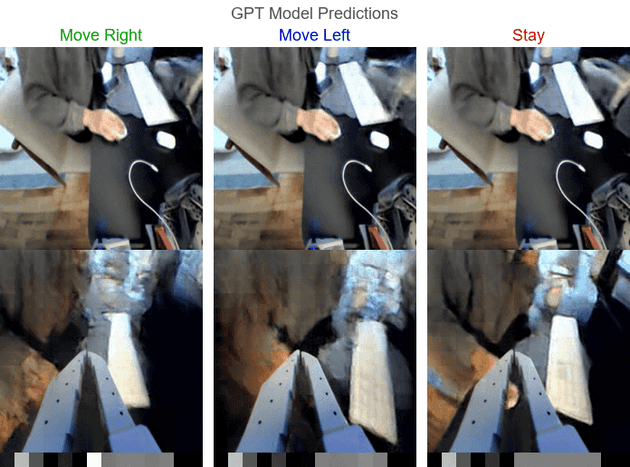

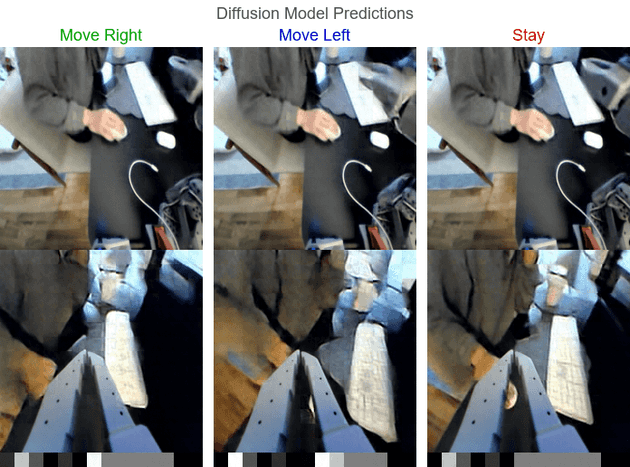

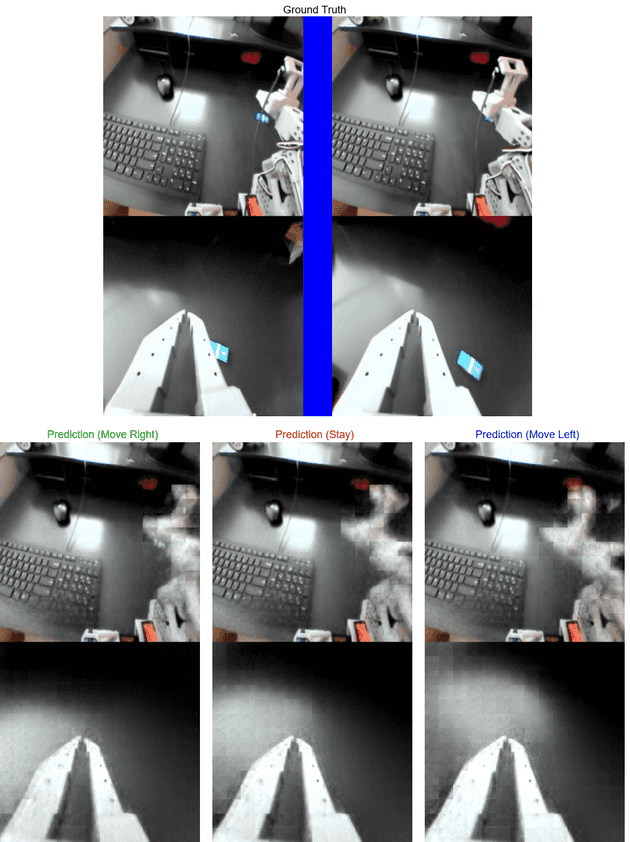

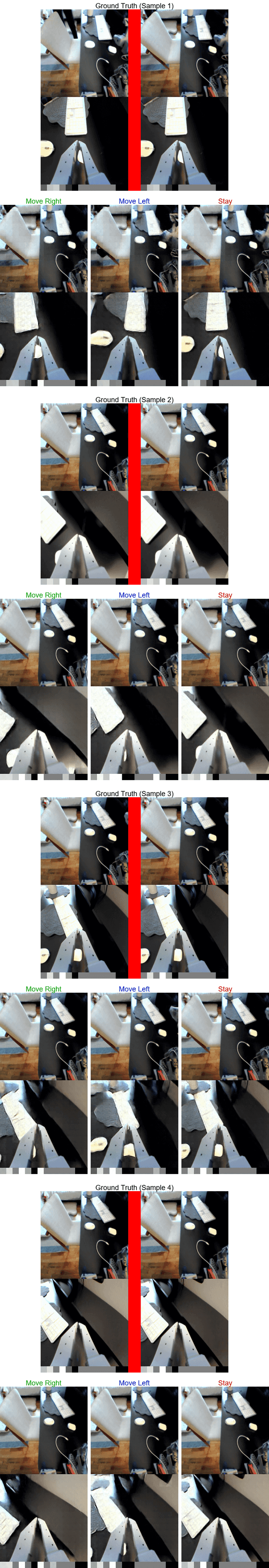

After I explored several high-level directions, the results strongly suggested that the limiting factor was the amount of data. At this point I decided to create a separate, more minimal repo, canvas-world-model, for running training runs via Claude Code. I collected more data and tried more models, including a more GPT-inspired model and a variation of the diffusion approach that Claude Code found to work.

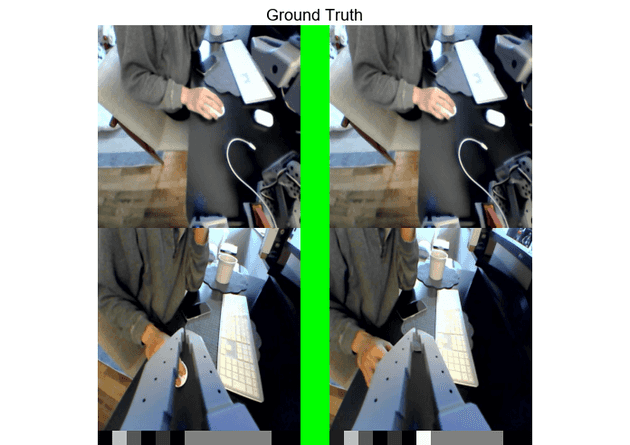

I also added motor position embeddings to the canvas (seen as the grayscale squares beneath the image frames in the diffusion model examples below).
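To make the idea concrete, here is a minimal sketch of how motor positions could be drawn as grayscale squares beneath a frame. This is not the implementation from canvas-world-model: the function name `append_motor_strip`, the square size, the position range, and the linear mapping from position to gray level are all assumptions for illustration.

```python
import numpy as np


def append_motor_strip(frame, motor_positions, pos_min, pos_max, square=8):
    """Return the frame with a strip of grayscale squares stacked beneath it.

    frame: uint8 array of shape (H, W) or (H, W, C).
    motor_positions: one value per motor, mapped linearly from
    [pos_min, pos_max] to gray levels 0..255 (an assumed encoding).
    """
    frame = np.asarray(frame, dtype=np.uint8)
    width = frame.shape[1]
    pos = np.asarray(motor_positions, dtype=np.float64)
    if len(pos) * square > width:
        raise ValueError("frame is too narrow for one square per motor")

    # Normalize each position to [0, 1], then to an 8-bit gray level.
    norm = np.clip((pos - pos_min) / (pos_max - pos_min), 0.0, 1.0)
    levels = np.round(norm * 255).astype(np.uint8)

    # Black strip with the same width (and channels) as the frame.
    strip = np.zeros((square, width) + frame.shape[2:], dtype=np.uint8)
    for i, level in enumerate(levels):
        strip[:, i * square:(i + 1) * square] = level

    return np.vstack([frame, strip])


if __name__ == "__main__":
    frame = np.zeros((16, 32), dtype=np.uint8)
    canvas = append_motor_strip(frame, [0.0, 50.0, 100.0], 0.0, 100.0)
    print(canvas.shape)  # frame height plus one row of squares
```

Encoding the motor state as pixels means the model sees it through the same canvas as the image frames, so no separate input pathway is needed.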

You can see the GPT experiment summary, diffusion experiment summary, and hold experiment comparison for more details.

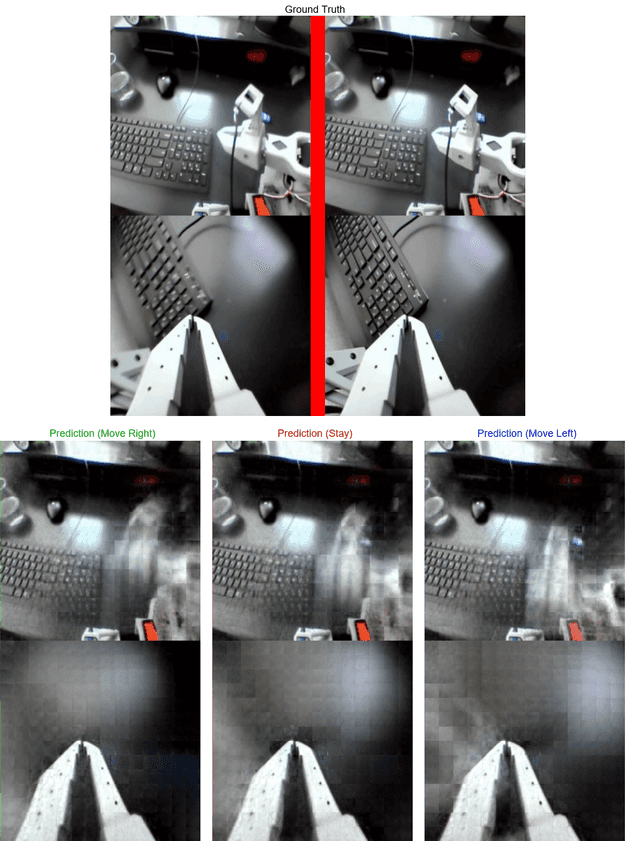

I’ll continue working with the DiT models for next steps, since their image quality is relatively good compared to the other models and inference is much faster than with the GPT-style model. Here are some examples of counterfactual inference from the best DiT model.

There’s still a lot of room for improvement in the predicted frame image quality, but I think the action conditioning is clear enough to try to use the model in a robot control task. My next goal is to close the loop and try to get the SO-101 to center its view on an object using the world model.