Here we are. 2026. A strange time for the tech industry.

As I wrote in my prior post, “It’s official: 2026 is a weird year for tech and programmers”:

> In January, I wrote a draft of a blog post on this weird new coding world ever since the widespread adoption of Claude Code. I shared that draft with a few programming colleagues. It led to probably the most pre-publication commentary I’ve ever received on an essay in this blog’s entire history. Which is something, in its own right. This is another small anecdotal data point that shows me that my colleagues are entering a very different era.

You are now (finally) reading that post. I worked through 40+ thoughtful comments from 10+ programming colleagues, thought hard about their feedback, and considered how things changed in the rapid cycle between January and April 2026. Things keep getting weirder, but I think the dust has now settled enough that I can put this out there. So, I’m publishing some of my thoughts here.

As someone who has worked in tech for a while, I know the industry has often had a certain strangeness to it. After all, this is the industry that inspired the hilarious “Silicon Valley” TV show, a show that becomes more and more like a documentary with every passing year.

But 2026 is especially strange.

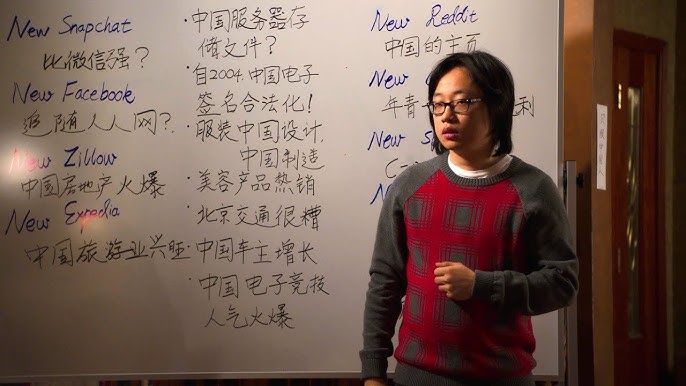

Jian Yang, a character in the TV show “Silicon Valley.” This is a scene from an episode in 2014 wherein he’s working at a whiteboard on his “new internet” startup ideas (that is: imitation knock-offs) to try to build and roll out in China.

Let’s talk about programmers. My people. Especially open source programmers and programmers-turned-startup-founders.

Why have I always loved this group? Because we tend to live in the future.

Putting aside the biggest corporations associated with Silicon Valley (going by strange names like FAANG or MAG7), the broader universe of Fortune 500 and Fortune 1000 companies tends to take years or decades to adopt new technology. These companies always do so in a risk-averse way.

The even wider universe of professionals (e.g. lawyers, doctors, accountants) and the broader public also tends to be slower on the uptake of new technology.

But not programmers. Programmers dive right in.

This is part of the reason why venture capitalists like to keep some programmers in their network — they hope they can get a glimpse of a future that is soon to arrive.

Paul Graham famously got ahead of the entire tech industry by convincing young programmers to start startups rather than working for large corporations, a model that became Y Combinator.

Programmers were early adopters of the internet, email, the early web, and the embedded devices that led to smartphones. Programmers were early adopters of remote/async/distributed work models, digital collaboration, social media, and SaaS.

Before Twitter/X, Reddit, and YouTube there were programmers sharing ideas and software on Usenet, IRC, listservs, and so on. Before there were professional SaaS tools like GitHub, there were self-hosted servers running open source bug tracking and version control software.[1] Before SaaS tools like Notion, there were self-hosted wikis.[2]

Before Covid-19 forced the whole world to experiment with remote collaboration, one of the most complex computer programs on Earth, the Linux kernel, was already developed by a worldwide distributed team of software craftspeople.

And on and on.

For most of my career, which has operated in parallel to the recent decades of software industry development, we have seen programmers doing it first, and the rest of the world eventually professionalizing the hacky technologies they built into something that could make sense to the general public.

What makes 2026 strange is how much this process has accelerated. The transition, sometimes called “crossing the chasm,” usually takes years. But here we are in 2026 and, right before my very eyes, within the span of the last 6-9 months, “agentic coding” has gone from a piece of applied research adopted by programmers to a core platform-wars bet of the major AI/LLM companies.

I mentioned that programmers usually live in the future. How so at this very moment? The biggest split could probably be described thusly:

- In the last 2-3 years, the mass of hundreds of millions of non-programmer general internet users adopted ChatGPT (and its ilk) as a weird alternative to Google, and as a new helper in certain life inconveniences — for example, students are using it for homework in lieu of copying off a friend; adults are using it to auto-write polite business emails in lieu of hiring a virtual assistant; the anxious are using it to appease internal brooding in lieu of a remote therapist.

- By contrast, in the last 6-9 months, programmers started using tools like Claude Code to ship entire working software codebases, 99.9% of whose code they didn’t themselves write. The agent wrote the code for them in “agentic loops” using nothing more than natural language prompts — and, perhaps also, a skeletal/initial code or architecture sketch — as a starting point.

Here’s the key idea: the contrast between these two kinds of use cases could not be greater. Even though the underlying technology is mostly the same.

For programmers, the effectiveness of Claude Code has inspired both excitement… and dread.

My programmer colleagues now constantly chatter about it. Here are some of the sentiments they express:

- Will programming ever be the same?

- Did I waste years of my life learning the intricacies of code, given that an AI/LLM model can now write well-structured code from natural language prompts much quicker than I can?

- Do I need to refashion myself as a manager of AI/LLM models and shift my job to being primarily about code review and integration, since the act of writing code is now commodified?

- If these models get better in unforeseen ways, will programmers even be needed at all?

You can also find groups that are full of newfound joy — and even some groups full of what might seem to be irrational exuberance. In those circles, you’ll hear things like this:

- I was just given an army of junior programmers, effectively for free — I can build pretty much anything I want, now!

- The barrier to entry for product startups has been dramatically lowered, I can ship amazing products in a fraction of the time now!

- All of my side projects used to be limited by the friction of finding a full day to sit down and write the code — I can prompt my side projects into existence, now!

One would think that the availability of these capabilities might take a few years to work its way through academia, government, and the corporate world. But Anthropic drew an interesting lesson from the rapid growth of Claude Code among programmers: programmers might simply be on the bleeding edge of a mainstream adoption cycle, much as they were in past eras.

Competitive tech innovation races are, of course, nothing new. In the land of AI/LLM models, we have a grand race, indeed. Focusing only on the top 4 in the US, you have a battle waged in billions of R&D spend and trillions of data center spend. That is: the dueling ideas and massive server farms behind OpenAI ChatGPT, Anthropic Claude, Google Gemini, Microsoft Copilot[3].

But, Anthropic did something special here. They noticed the rapid adoption of “agentic coding” tools (namely Claude Code) and predicted that making “agentic coding capabilities” available to “mere mortals” via a non-coding interface would accelerate the usual tech adoption cycle. What’s more: because agentic coding capabilities can be used to build such software, they could attempt a rapid recursive self-improvement loop here. And so they did. The result was Claude Cowork being built in just 10 days[4], mostly with the help of Claude Code as the coding agent.

Claude Cowork has now been imitated by OpenAI in their Codex product (under the mantra, “Codex for Everything”). The two major platforms have both shipped desktop apps that provide a natural language interface atop on-the-fly coding capabilities (with secure sandboxing approaches). The layperson doesn’t need to understand this paragraph, nor reason about the code these apps generate under the hood.

When you ask Cowork or Codex to do work for you, the model decides whether to respond from its pre-trained world model, or whether to write a computer program that solves the problem for you, run it in a sandbox environment, and then use its pre-trained world model to reason about its output to tell you what it did and what the output/artifact means.
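To make that loop concrete, here is a toy sketch in Python. It is not how Cowork or Codex are actually implemented. Every name in it (`Plan`, `plan_request`, `run_in_sandbox`, `handle`) is hypothetical, the "model" is a hard-coded stand-in, and the "sandbox" is just a child process with a timeout. The only point is the shape of the flow: decide, maybe write a program, run it in isolation, then explain the result.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Plan:
    """Hypothetical routing decision made by the model."""
    needs_code: bool
    payload: str  # a direct answer, or Python source to execute


def plan_request(request: str) -> Plan:
    """Stand-in for the model. A real agent would call an LLM here."""
    if request.startswith("sum "):
        numbers = [int(tok) for tok in request.split()[1:]]
        # Pretend the model wrote this one-line program for us.
        return Plan(needs_code=True, payload=f"print(sum({numbers!r}))")
    return Plan(needs_code=False, payload=f"(answer from model knowledge) {request}")


def run_in_sandbox(source: str, timeout_s: float = 5.0) -> str:
    """Run generated code in a child process with a timeout.

    Assumption: this only illustrates the step. A child process is NOT a
    real security boundary; real products use much stronger isolation.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "generated.py"
        script.write_text(source)
        result = subprocess.run(
            [sys.executable, "-I", str(script)],  # -I: Python's isolated mode
            capture_output=True,
            text=True,
            timeout=timeout_s,
            cwd=workdir,
        )
    if result.returncode != 0:
        raise RuntimeError(result.stderr.strip())
    return result.stdout.strip()


def handle(request: str) -> str:
    """Answer directly, or write a program, run it, and report on the output."""
    plan = plan_request(request)
    if not plan.needs_code:
        return plan.payload
    output = run_in_sandbox(plan.payload)
    return f"I wrote and ran a short program; its output was: {output}"


if __name__ == "__main__":
    print(handle("sum 1 2 3"))
    print(handle("what is agentic coding?"))
```

In this sketch, the user never sees `generated.py`. It exists for a moment, runs once, and is deleted along with its temporary directory.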

Anthropic has been on a product development spree, lately, with this approach. They shipped a Claude Add-on for Microsoft Excel that uses this approach to generate, in response to user queries, JavaScript code that executes against Excel’s JavaScript API. And then they did the same thing for Microsoft Word and Microsoft PowerPoint.

Do you see what’s happening here? “Software is eating the world” but in a way that we didn’t quite expect. It’s not SaaS that’s eating the world. It’s JITS: Just-In-Time-Software.

Meanwhile, college students are rapidly adopting AI/LLM models for classwork. The non-programmers among them are debating whether learning how to program is still a “good investment.” The conventional wisdom seems to be that it is not. It was unequivocally a good investment in 2019-2023. This is when Computer Science programs had historically high enrollments, up over 3x since their trough in 2006. But after years of the mainstream press writing articles pondering whether programming is “solved” by AI/LLM models and speculating about rapid job loss for programmer roles? With that backdrop, CS enrollments at undergraduate colleges are in rapid decline.

So, at the same time that the capability of senior programmers has never been greater, the arrival of these tools has disrupted the incentives and economics of training the next generation of programmers.

And how about major Fortune 500 and Fortune 1000 corporations? Outside of Silicon Valley, corporations are rushing to have an “AI strategy” and to roll out AI/LLM models on their teams, even without fully understanding what the ultimate goal might be of rolling out such a strategy. Established professionals are being pressured to adopt AI/LLM tools as junior assistants even though we don’t yet fully understand the risks or benefits. Many established professionals are also using the tooling “on the down low” regardless of employer policy.

Within Silicon Valley, though? There is a mixture of “tokenmaxxing” experimentation and defensive posturing meant to push the existing software engineering talent even harder for output, while hiring for junior engineers is much reduced, or even frozen.

So now we can discuss what makes things particularly weird. Here’s the key difference I’ve noticed in how programmers see the industry now, compared with past cycles.

In the past, the industry machinations meant that programmers had to learn new programming tools to get access to their users. For example, during the smartphone platform wars, Android development (typically with Java and later Kotlin) and iOS development (typically with Objective-C and later Swift) became important new skills for accessing those users. But the truth was, nothing fundamentally shifted about how software development and knowledge work were done during the rise of smartphones. We still built programs, which involved gathering specifications, designing interfaces, writing code, and testing code. Whether they were desktop software programs, web applications, or mobile applications, the nature of software development was fundamentally the same.

In this cycle, the fundamental nature of software development is changing. That has never happened before.

Yes, there is still the platform audience problem: how do you address users who might expect natural language interfaces? Or, how do you attract users in an era of declining web traffic — due to declining search engine usage?

But that’s not the thing on the mind of every programmer I know. What’s on their minds?

“Software development itself has changed forever, and is changing rapidly before my eyes. How do I cope?”

It’s leading to a lot of hand-wringing and existential questions.

Let me wax poetic about programming for a moment.

Programming is a craft, like writing.

But, unlike writing, the point of programming isn’t necessarily the code — it’s the product. That is, programmers rarely read code “for fun” (there is no such thing as a “fiction genre” for code). Perhaps even more surprising, programmers also rarely read code “for information” (that is, there isn’t a “non-fiction genre” for code, either). Instead, code is read so that it might be changed.

Code is read to understand it, in order to change it. And the reason we programmers aim to change code is to improve the product it powers.

You probably don’t want to read AI slop fiction because part of your aim when you read fiction is to experience the subjective reality of the human author who wrote the fiction. But is there any issue using a product that, under the hood, is AI slop code — if the software product fully works the way you expect and solves a problem for you? Certainly not. After all, people have been using proprietary software for decades — which is to say, software where, for all they know, the underlying code could have been entirely generated rather than written by hand by humans.

For many years, we in the software industry, myself included, believed programmers had a truly privileged position in the economy. We not only got to work on a craft all day at work, but our craft “worked” to produce economically valuable products. Software companies could be artistically motivated on the inside and economically valuable on the outside. And often the best ones were. That entire framing is now in question.

It is not just the commodification of labor value that is scaring my fellow programmers.

It is the idea that the nature of the craft has been obliterated. And that this is a one-way door.

On the other side of the door is a world where programming is no longer a craft and code, per se, no longer matters. Where all that matters is the ruthless efficiency of shipped product.

There are other creative industries that have the same anxieties, but the two biggest ones that have the most striking parallels are film and music. These were “commercial arts” where technology was always in a supporting role to the human creatives, but where a certain strain of generative AI might aim to suck the humanity out of them altogether in the name of ruthless efficiency.

Here’s the thing: whatever we fear might happen to the film and music industries in this era, it will happen to the software industry first.

And the AI platform companies have discovered that programmers are an interesting early adopter market for their wider knowledge work automation capabilities.

This all makes sense when you start to think deeply about it.

Software is a closed system of mostly non-subjective artifacts. What makes a film or piece of music good is how it makes you feel, how authentically it charges at your human spirit. But what makes a piece of software good is “merely” (and usually) how well it works.

The economic system also “values” bespoke working software (to solve pervasive and repetitive problems) in a way it simply doesn’t “value” bespoke music or bespoke film. So the forces of the market, as well as the structural nature of the software industry, will drive toward changes here before the other creative fields are altogether disrupted. As a former colleague, Keith Bourgoin, put it when commenting on an earlier draft of this essay: “Humanity needs art. It shows up in every time and culture. There’s even occasionally a ton of money in it. But, it just so happens that software has permeated the entire economy and is verifiable. So it goes first.”

It is in this way that my colleagues and I all feel we are standing on a fragile precipice. We excitedly spin open our code editors and terminals and marvel at what the AI/LLM models can do with us. We have never been more empowered or productive than we are now.

If anything, the only thing that can hold us back in 2026 is our lack of focus on projects that really matter to us, due to the excitement of being able to make progress on any project that comes to mind for us.

But it’s very weird to realize that our jobs have been forever changed.

It’s very weird to realize that a big part of our job now consists in communicating with an alien technology we don’t fully understand — and that we can never be expected to understand at any deep level. It’s weird for us to have so much power with so little effort. It’s weird to consider that the next generation of programmers will never understand code at the deep level we do, because they won’t be forced to learn programming languages since deep fluency in them is no longer a barrier-to-entry for shipping working software.

It’s also very weird for us to enter an era where the spells we used to cast as wizards (files full of often lovingly hand-crafted code) are now just an intermediate artifact in a multi-stage process to automate the whole knowledge work economy. I once wrote a popular code style guide for the Python programming language and published it to GitHub. Rather than this style guide being a discussion point for human programmer teams, it’s now much more widely adopted as training data, reduced to “just some weights” floating in the ether. I guess thanks to AI/LLM coding models, it is subtly improving the style of huge piles of generated code. But does the style of that code even matter? After all, it might be written once, run once, and then thrown away forever.

That’s very weird — and not at all what I expected for this industry.

And you know what else is weird? I’m excited anyway. I’m having fun anyway. I’m in the irrationally exuberant[5] camp above.

Ever the techno-optimist, I know that my startup is getting an advantage here. I know being a startup founder in the dev tools space is exactly where I need to be. And I can’t wait to see what we build from this new vantage point on code.

But I’m also scared. Because I know we haven’t thought this all through. And I know that coding models are just an early-adopter wave that I’m competently surfing, as I usually do for new technology waves. But the wave keeps getting taller. And the non-programmer laypeople on dry land below me just go about their business, unbothered by the growing shadow.

Note on my dev tools startup, PX Systems

I don’t feel comfortable making grand & sweeping predictions about how developer tooling is going to change in 2026. But, I’m working on my own contribution — solving a problem at a much lower level than AI/LLM models — with my new startup, PX Systems, which provides a daily developer tool for going from code to cloud cluster in seconds.

That tool (centered around a command-line utility called, simply, `px`) makes building a cloud cluster as easy as running `px cluster up` and executing code on that cloud cluster as easy as running `px job submit`. Our bet is that programmers and AI agents will have an easier time than ever going from “zero to one” on their backend coding projects, but they’ll still need deterministic tooling to get human-written or AI-generated code running reliably with just-in-time provisioning of distributed cloud hardware.

I discussed the thinking behind this new startup in a prior blog post.

Acknowledgements

As I mentioned at the top, this essay benefited from a round of revisions after sharing an early draft with many former and current programming colleagues. I want to thank and acknowledge Evan K, Rom D, Nelson M, Cody H, Jay G, Sal G, Keith B, Will B, Annelise L, Hannah W, Flavius P, Arthur S, Daniela B, and Chris W. Each of them reviewed an earlier draft, and all of their comments influenced my thinking in this draft. I also want to thank Evan K, Keith B, Jay G, and Rom D in particular, as I had a hearty real-time 1:1 discussion with each of them on this topic, and each conversation challenged my thinking in new ways.

Footnotes