What happens when an AI hallucination leads to bombing an elementary school?

It appears likely that the US government is using models from Anthropic, OpenAI, Google and/or xAI to process signals intelligence (SIGINT) and to generate AI-driven “kill lists” that determine where to drop its bombs.

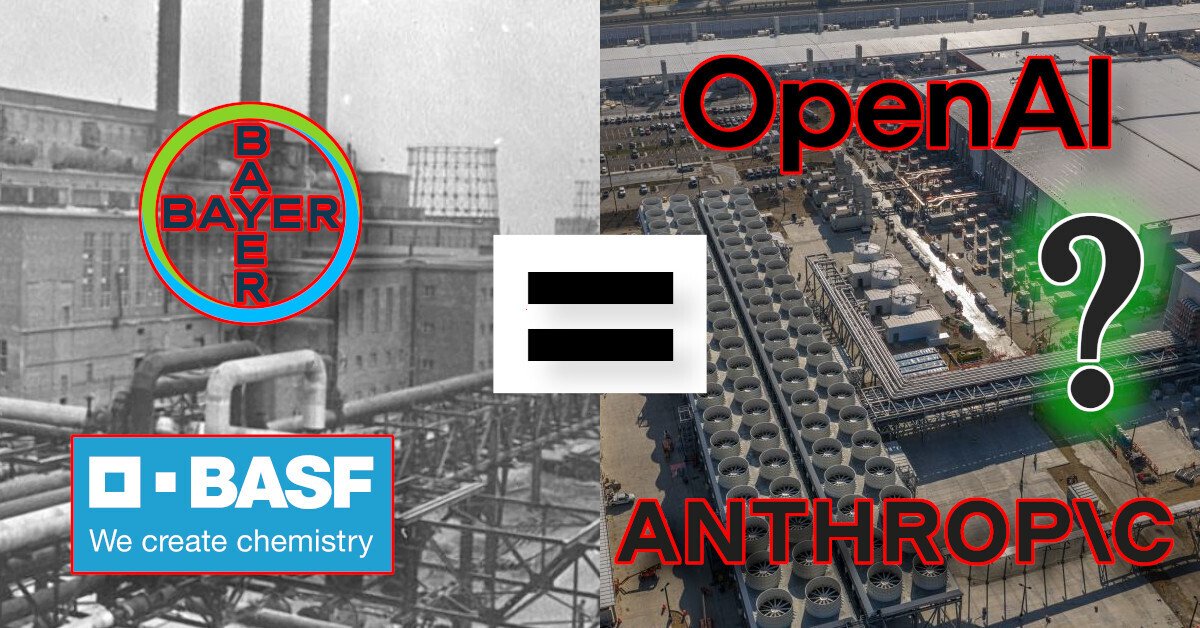

[Right] This AI datacenter is a machinery of war; its LLM hallucinations decide which children to assassinate. [Left] This IG Farben (Bayer/BASF) factory in Auschwitz produced Zyklon B for the Nazis, who murdered over a million children.

In Apr 2024, +972 (an Israeli news outlet) published a 9,000+ word article describing how the Israeli military had been using artificial intelligence to decide which (residential) buildings, hospitals, and schools to bomb in Gaza.

In Feb 2026, the US (and Israel) bombed Iran, killing over 100 schoolchildren (and Ali Khamenei).

In Mar 2026, it appears likely that the US has built a similar system, leveraging US AI companies’ tech to decide which (school) buildings to bomb, false-positive hallucinations be damned.

Who targeted the Shajareh Tayyiba girls’ elementary school in Minab, Iran? Could it have been an AI hallucination? A false-positive?

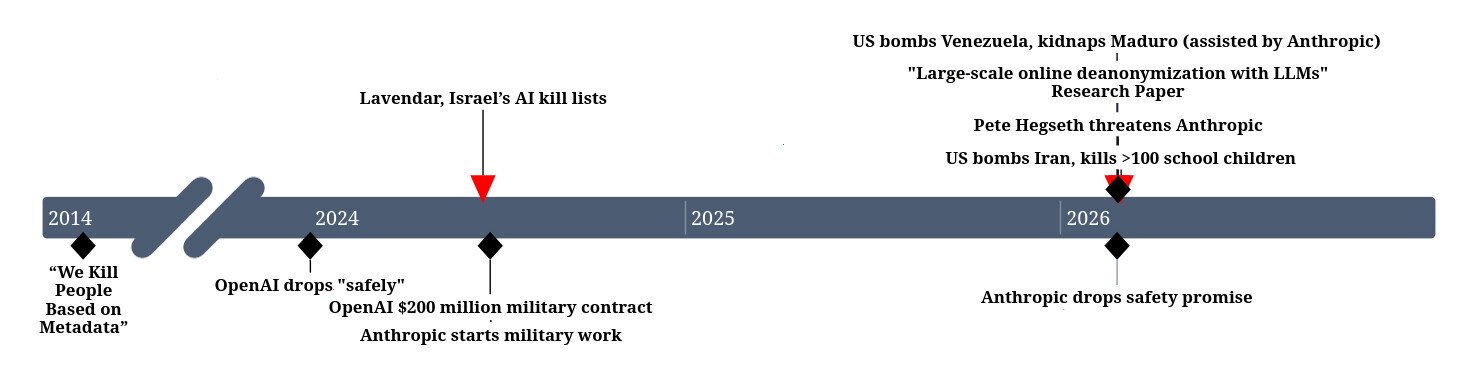

| Date | Event |
|------|-------|
| 2014-04-07 | General Michael Hayden says “We Kill People Based on Metadata” |
| 2024 | OpenAI drops “safely” from mission statement |
| 2024-04-03 | Yuval Abraham exposes Lavender, Israel’s AI kill lists |
| 2024-06-17 | OpenAI gets $200 million as military contractor |
| 2024-06-24 | Anthropic becomes a military contractor |
| 2026-01-03 | US bombs Venezuela, kidnaps Maduro (assisted by Anthropic) |
| 2026-02-24 | Anthropic ditches its core safety promise |
| 2026-02-25 | Large-scale online deanonymization with LLMs research paper published |
| 2026-02-27 | Pete Hegseth threatens Anthropic |
| 2026-02-28 | US bombs Iran, kills >100 schoolchildren |

In a now-infamous panel, Ex-NSA Chief Michael Hayden said “We Kill People Based on Metadata”

In April 2014, in a discussion following Ed Snowden’s whistleblowing, the ex-NSA chief (and US military general) Michael Hayden publicly (on camera!) said very clearly about the US: “We Kill People Based on Metadata.”

Ten years later, in April 2024, we learned that the Israeli military was feeding metadata into AI systems that flagged people for assassination. It was further reported that the humans responsible for double-checking these “AI-generated kill lists” were effectively just a rubber stamp: they routinely bombed where the AI said to bomb (killing women and children) without first checking whether the target was legitimate or an AI hallucination (a false positive).

The metadata processed by the Israeli AI system (to determine who to bomb) included:

- Your location (e.g., sleeping in a different location frequently)

- Your digital fingerprints changing (e.g., changing phones frequently)

- Your social graph (e.g., being in a WhatsApp group with a known militant)

This. is. terrifying.

In case it’s not obvious to the reader, this sort of system is inevitably full of false positives. As a privacy-conscious digital nomad, I (yours truly, the author of this article) would surely be identified by this system as a target for assassination.

It might also partially explain why so many journalists have been killed by Israel (and, likely, pizza/shawarma delivery drivers). Just because a known militant messages you on WhatsApp does not make you a militant.
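The reason false positives are inevitable is the base-rate problem: when actual militants are a tiny fraction of the population, even a classifier that is rarely wrong about any one person will flag far more innocent people than real targets. The short Python sketch below illustrates the arithmetic. Every number in it is hypothetical and chosen only for illustration (it is not taken from the +972 report or from any real system): a population of 1,000,000 people, 1,000 of whom are actual militants, and a classifier that correctly flags 90% of militants (sensitivity) and correctly clears 99% of everyone else (specificity).

```python
def base_rate_precision(population, true_targets, sensitivity, specificity):
    """Return (true_positives, false_positives, precision) for a hypothetical classifier.

    All inputs are illustrative assumptions, not figures from any real system.
    """
    innocents = population - true_targets
    # Real targets the classifier correctly flags
    true_positives = sensitivity * true_targets
    # Innocent people the classifier wrongly flags
    false_positives = (1 - specificity) * innocents
    # Fraction of all flagged people who are actually targets
    precision = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, precision


# Hypothetical numbers: 1,000,000 people, 1,000 real targets, 90% sensitivity, 99% specificity
tp, fp, precision = base_rate_precision(1_000_000, 1_000, 0.90, 0.99)
print(f"flagged correctly:   {tp:,.0f}")
print(f"flagged incorrectly: {fp:,.0f}")
print(f"share of flags that are correct: {precision:.1%}")
```

With these made-up numbers, about 900 real targets are flagged alongside about 9,990 innocent people, so only around 8% of the resulting “kill list” would be correct. Because innocent people vastly outnumber militants, even a 1% false-positive rate dominates the output.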

On 2026-01-03, the US bombed Venezuela, murdering civilians and kidnapping the country’s leader, Nicolás Maduro.

On 2026-02-28, the US bombed Iran, murdering civilians and the country’s leader, Ali Khamenei.

“Anthropic has supported American warfighters since June 2024”

Source: Statement on the comments from Secretary of War Pete Hegseth by Anthropic

In the ~2 months between these two crimes of aggression, there was a lot of controversy between Anthropic and Pete Hegseth (US Secretary of Defense). We also learned that Anthropic assisted the US government in its unprovoked attack on Venezuela in January (via the cybermercenary company Palantir).

Anthropic has been a military contractor since June 2024, yet even Anthropic itself has said that current models are too unreliable (too prone to hallucinations and false positives) to be used in fully autonomous weapons.

“we do not believe that today’s frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America’s warfighters and civilians.”

Source: Statement on the comments from Secretary of War Pete Hegseth by Anthropic

It’s important to note that, also between these two attacks, Anthropic ditched its core safety promise, and we learned that OpenAI dropped the word “safely” from its mission statement.

And then, shortly after this controversy hit the press, the US bombed Iran.

3 days before the US bombed Iran, this paper was published

On 2026-02-25, 3 days before the US attacked Iran, Anthropic and researchers at ETH Zurich co-published a paper showing how their LLMs could be used for highly effective deanonymization attacks.

In the attack on Venezuela, the US was physically targeting Nicolás Maduro.

In the attack on Iran, the US was physically targeting Ali Khamenei.

It’s likely that both of these targets were trying to keep their physical location unknown to the US military. It’s likely that the US military used AI to process their panoptic datasets to guess where they were located. It’s likely AI suggested several possible targets.

And it’s likely that the targets the AI recommended bombing included false positives, possibly including schools.

In Men in Black (1997), Jay (a police officer) recognizes an anomaly in an 8-year-old girl because she is carrying books on quantum physics. After detecting the anomaly, he shoots her in the head.

We all know that AI is not intelligent. In the best case, it spews (hallucinates) misinformation. In the worst case, it praises Hitler and denies the Holocaust.

What happens when you feed an AI system all the metadata collected from a foreign adversary and ask it to identify military targets? Does it sometimes tell you that 8-year-old “little Tiffany” should be shot in the head because she’s displaying some anomalous behavior (carrying books on quantum physics)?

Unfortunately, this isn’t sci-fi anymore. Governments are actually relying on these broken AI anomaly detection systems to decide where to drop their bombs, and real children are dying.

This AI datacenter is the machinery of war. Its LLM hallucinations decide which children to assassinate

When the world saw the horrors of mustard gas, the international community got together and agreed to stop using chemical weapons in war.

Neural networks and LLMs have taken the world by storm. They’re new (immature) systems that are causing innocent people to die by the tens of thousands.

I think it’s time for another international convention to address these issues. We need to ban the use of AI by police and militaries.

We need to establish that the use of AI in war is a War Crime.

We need to establish that the use of AI to target civilians is a Crime Against Humanity.

And, finally, we need bodies like the ICC and ICJ to prosecute criminal (ab)use of AI.

Start Local

Don’t have influence at the United Nations? Well, you do have influence in your own city.

Write your city representatives. Tell them that you want them to pass legislation that bars your local police from using AI.

Once you win at the city level, write your federal representatives. Tell them that you want them to pass legislation that bars your own military from using AI.

There is hope. The EU had the foresight to pass legislation (the AI Act, agreed in December 2023 and formally adopted in 2024) that restricts the use of AI in high-risk applications. When more countries around the world get on board with such legislation, we can finally come together as a world and ban AI from being used in war.

- ‘Lavender’: The AI machine directing Israel’s bombing spree in Gaza by Yuval Abraham

- Report: Shajareh Tayyiba girls’ elementary school in Minab bombed by Mahmoud Aslan (Drop Site News)

- Algorithmic Justice League (Unmasking AI harms and biases)

- Committee to Protect Journalists’ report on Israel’s murdering of journalists in Palestine

- Large-scale online deanonymization with LLMs


Hi, I’m Michael Altfield. I write articles about opsec, privacy, and devops ➡