DISCLOSURE: If you buy through affiliate links, I may earn a small commission. (disclosures)

For the past several months I've been searching for the Missing Programming Language - a language with a good balance of types, performance, ecosystem, and agentic AI performance. I've landed on a specific flavor of Rust - High-Level Rust - which gives me 80% of its benefits with 20% of its obstacles.

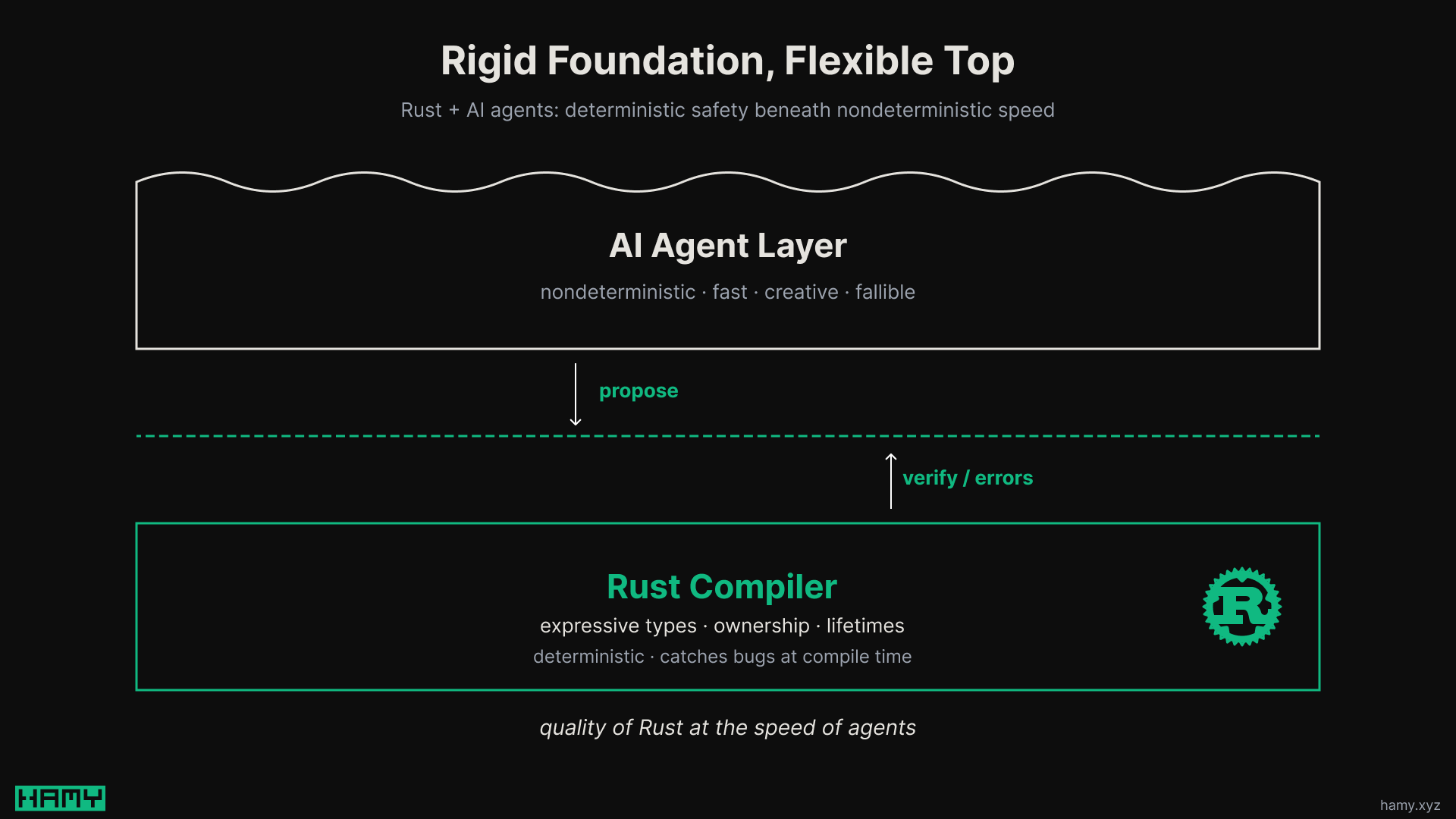

In this post I want to share why I think Rust is uniquely positioned for agentic engineering - providing a deterministic foundation upon which the nondeterministic models can build.

What are agents good for?

AI agents are good at coding. You can give them a task and they can return working code. It might not be exactly what you wanted or how you'd have done it yourself, but generally the code works.

This has led to the rise of agentic engineering and is a big productivity unlock because you can now compress coding time. You can kick off multiple agents in parallel on the same or different tasks then go do something else while they work.

Coding may not have been THE bottleneck in every product lifecycle but it was A bottleneck and compressing it has led to huge changes in what product teams look like - smaller teams with more agents, prototypes over static plans, and validating faster in prod.

The problem (and power) of agents is their nondeterminism. Like humans, they need a deterministic runtime/compiler/linter to double-check their work and confirm it behaves as expected.

This is where Rust comes in.

What is Rust good for?

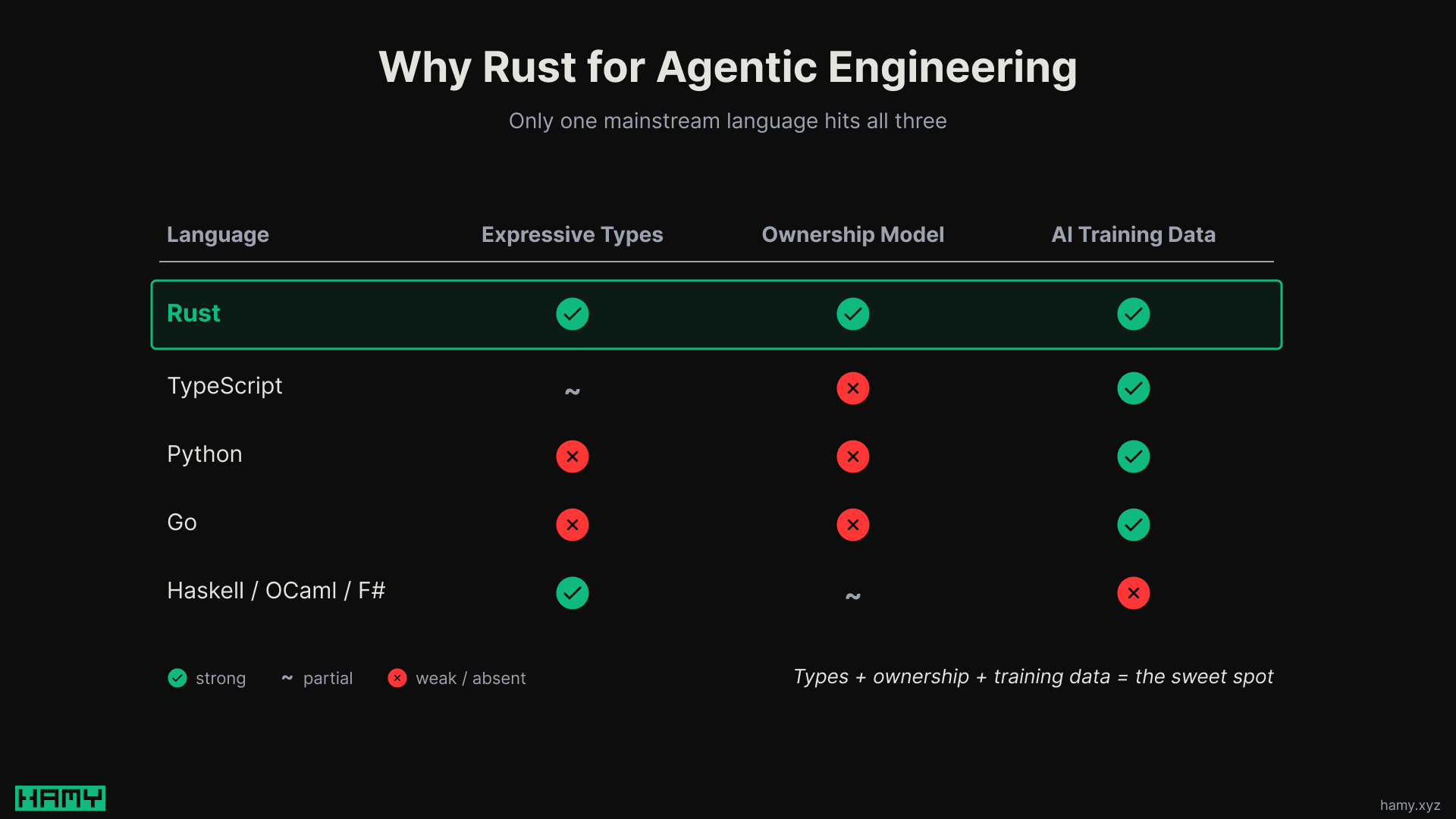

There are many programming languages and tools that could provide this deterministic checking layer but Rust goes a bit further than any other mainstream language.

- Expressive types - Allow precise modeling of the domain, making invalid states unrepresentable. This lets you set up guardrails that prevent many common bugs.

- Ownership and lifetime rules - Rust's data ownership and lifetime rules help prevent many kinds of runtime bugs that other languages allow, from unexpected mutations to data staleness.
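To make those two bullets concrete, here's a minimal sketch. The `Order` domain and the `pay` / `ship` functions are hypothetical names I made up for illustration, not something from a real codebase. Each enum variant only carries the data that is valid in that state, and each transition takes the order by value, so the old state can't be touched after it moves on.

```rust
/// Hypothetical domain: an order is a draft, paid, or shipped.
/// Each variant holds only the data that is valid in that state, so a
/// shipped order without a tracking number cannot be constructed.
#[derive(Debug, PartialEq)]
enum Order {
    Draft { items: Vec<String> },
    Paid { items: Vec<String>, amount_cents: u64 },
    Shipped { items: Vec<String>, tracking: String },
}

#[derive(Debug, PartialEq)]
enum OrderError {
    EmptyOrder,
    NotDraft,
    NotPaid,
}

/// Takes the order by value: the caller gives up ownership, so the stale
/// draft cannot be read or mutated once it has been paid.
fn pay(order: Order, amount_cents: u64) -> Result<Order, OrderError> {
    match order {
        Order::Draft { items } if items.is_empty() => Err(OrderError::EmptyOrder),
        Order::Draft { items } => Ok(Order::Paid { items, amount_cents }),
        _ => Err(OrderError::NotDraft),
    }
}

/// Only a paid order can become a shipped one; the match makes every
/// other case an explicit error instead of a silent bad state.
fn ship(order: Order, tracking: String) -> Result<Order, OrderError> {
    match order {
        Order::Paid { items, .. } => Ok(Order::Shipped { items, tracking }),
        _ => Err(OrderError::NotPaid),
    }
}

// Usage (inside some function):
//   let draft = Order::Draft { items: vec!["book".to_string()] };
//   let paid = pay(draft, 1500)?;
//   // Using `draft` here is a compile error: the value was moved into `pay`.
```

An agent that tries to ship a draft, or keeps using the draft after paying, gets stopped by the type checker or borrow checker rather than by a bug report.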

Rust's compiler and ecosystem of tools don't catch every bad outcome. But they do tend to push you into a pit of success, narrowing the opportunity space so that more outcomes are good ones.

Rust + Agentic Engineering

Rust is known for having a steep learning curve and slow iteration velocity compared to other high-level languages. But once you understand it, it tends to lead to better quality systems because more classes of bugs are prevented.

AI agents are known for producing code very quickly. It generally works but often misses on quality or direction.

By putting them together, you get a:

- Deterministic foundation - Pushing towards quality

- Nondeterministic agent - Pushing quickly towards change

This gives you the reliability of Rust at the iteration speed of agents, which is a pretty powerful combination. SWE-bench Multilingual backs this up: Rust tasks were completed 58.14% of the time, the best rate across 9 languages.

One-shot is a different story - for generating code from a single prompt, dynamic languages like Ruby and Python are 1.4-2.6x faster and cheaper than Rust (via ai-coding-lang-bench). But agentic work isn't one-shot, it's iterative, and the compiler loop is what pays dividends - especially in larger, more mission critical codebases.
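Here's a rough sketch of what I mean by the compiler loop. Everything in it is an assumption for illustration: the `Agent` trait stands in for a model call, `ScriptedAgent` is a fake that replays canned replies, and `braces_balance` is a toy check standing in for the compiler. In a real setup the check would more likely shell out to something like `cargo check`.

```rust
/// A deterministic check (compile, lint, test) over a candidate.
/// Kept as a plain function pointer so the sketch is self-contained.
type Check = fn(&str) -> Result<(), String>;

/// Hypothetical stand-in for a model call: given the task and the last
/// error (if any), return a new candidate.
trait Agent {
    fn propose(&mut self, task: &str, last_error: Option<&str>) -> String;
}

/// Propose, check, feed the error back, repeat. Returns the accepted
/// candidate and the attempt number, or the last error once the budget
/// of attempts is used up.
fn iterate<A: Agent>(
    agent: &mut A,
    task: &str,
    check: Check,
    max_attempts: usize,
) -> Result<(String, usize), String> {
    let mut last_error: Option<String> = None;
    for attempt in 1..=max_attempts {
        let candidate = agent.propose(task, last_error.as_deref());
        match check(&candidate) {
            Ok(()) => return Ok((candidate, attempt)),
            Err(e) => last_error = Some(e),
        }
    }
    Err(last_error.unwrap_or_else(|| "no attempts made".to_string()))
}

/// Toy check standing in for a compiler: braces must balance.
fn braces_balance(code: &str) -> Result<(), String> {
    let mut depth: i32 = 0;
    for c in code.chars() {
        match c {
            '{' => depth += 1,
            '}' => depth -= 1,
            _ => {}
        }
        if depth < 0 {
            return Err("unexpected `}`".to_string());
        }
    }
    if depth == 0 {
        Ok(())
    } else {
        Err(format!("{} unclosed `{{`", depth))
    }
}

/// Fake agent that replays canned replies and records the errors it saw.
struct ScriptedAgent {
    replies: Vec<String>,
    errors_seen: Vec<String>,
}

impl Agent for ScriptedAgent {
    fn propose(&mut self, _task: &str, last_error: Option<&str>) -> String {
        if let Some(e) = last_error {
            self.errors_seen.push(e.to_string());
        }
        if self.replies.is_empty() {
            String::new()
        } else {
            self.replies.remove(0)
        }
    }
}
```

The agent side is the nondeterministic part; the check is the same every time. The stricter and more informative the check, the more each failed attempt teaches the next one.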

The way I think about it: agents give us so much speed, so why not sacrifice some of it to maintain (or even gain) quality? Slow is smooth and smooth is fast, or: move fast with stable infrastructure.

Note: I think this balance of rigid + flexible is a universal pattern for Atomic Systems.

None of this solves the direction portion but that's typically where the driving human steps in (at least for now).

What about other languages?

I think there are several other languages out there that have similar attributes but Rust is a better fit than those in the current landscape.

One of the biggest critiques I get on High-Level Rust is that I could get most of the benefits much more simply by switching to a different high-level language with expressive types - e.g. F#, OCaml, Scala, Roc, Lisette, Gleam, Haskell, etc.

I think this is largely true, but AI tends to be pretty bad at them. This is one of the big reasons I started moving away from F#. A 2026.01 study (FPEval) found LLMs generate non-idiomatic, imperative code in functional languages like Haskell, OCaml, and Scala even when the output is correct.

There are many reasons for this but the main ones from the various benchmarks seem to be:

- Training data volume - 37.4% (via paper) - More training data leads to better idiomatic code production / understanding of available libraries, tools, and approaches. According to the 2025 Stack Overflow Dev Survey, Rust is a top 15 language with ~15% of respondents using it while the others are <3%.

- Syntax simplicity - <10% - The simpler the syntax, the fewer iterations AI needs to write it correctly. Rust is typically bad at this.

- Actionable compiler errors - <10% - The better the errors, the better AI is at iterating towards a working solution. Rust is typically good at this.

These other languages arguably have simpler syntax than Rust and potentially similarly useful compiler errors, but they have nowhere near as much training data. I think this could change if one of them gets very popular, if AIs get better at reasoning and can "learn" a language over time, or if an AI lab takes a particular interest in improving a given language's performance (though that interest is typically proportional to the language's existing usage). I see this as a chicken-or-egg problem, and it's why small languages may face an AI-driven death spiral - fewer users -> AI is worse at them -> fewer users.

Next

I've been learning and building with Rust for the last few months using agentic engineering and have had a great time with it. Rust has most of the attributes I was looking for in the missing programming language, but its devx suffers in terms of iteration velocity and learning curve. Agentic engineering coupled with strategies like High-Level Rust has largely removed that devx barrier, allowing me to write it as fast as a high-level language while still getting the quality benefits of the Rust compiler.

If you're curious how I'm building webapps with Rust, you can check out CloudSeed - my fullstack Rust boilerplate.
